Full body motion controller for Mobile VR / AR / SmartTV / IoT

Play motion-controlled VR games with Samsung Gear VR, Google Daydream or any other Android or iOS HMDs!

VicoVR is a Bluetooth accessory that provides wireless full body and positional tracking to Android and iOS smart devices, without a PC, wires, or wearable sensors!

Wireless Full Body Tracking

Positional Tracking of Mobile HMD

SDK (Unity 3D, UE4)

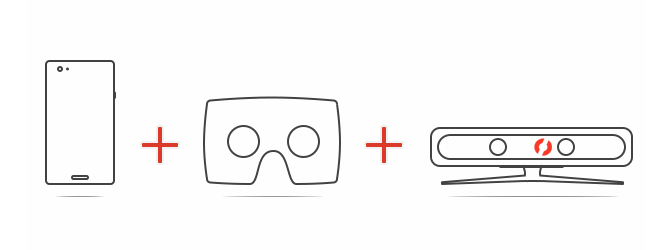

Motion Gaming in Mobile VR

Play free VR games with full body interactivity!

VicoVR is a Bluetooth accessory that allows you to play motion games in Mobile Virtual Reality (VR). No PC, no wires, no wearable sensors! All you need is VicoVR and a Mobile VR headset.

Compatible with Samsung Gear VR, Google Daydream, iPhone and any other Android or iOS headset!

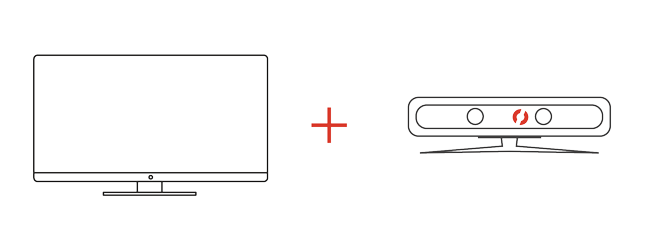

Motion Gaming on Android TV and Apple TV

Play free SmartTV games with full body interactivity!

Compatible with Android TV and Apple TV! VicoVR is a Bluetooth accessory that allows you to play motion games on your TV without a PC, a gaming console or wearable sensors. All you need is VicoVR and a SmartTV!

Coming Soon

Develop Android and iOS apps with full body interactivity

Create mobile apps with full body interactivity using the VicoVR SDK (Unity 3D, UE4).

VicoVR is a Bluetooth accessory that provides gesture recognition and positional tracking to Android and iOS VR / AR / SmartTV / IoT devices.

What makes VicoVR special is its vision processor, which tracks the human body in real time without a PC, wires, or wearable sensors! Visit the Developers corner for more info.